Social Media Hydra

! This claim about election fraud is disputed

Social media platforms like Twitter and Facebook are taking action to reduce misinformation (or “information,” depending on your preferred bias). But it’s become a game of whack-a-mole with new entrants flooding the market to capture “free speech” advocates. Sites such as Parler, MeWe, and Gab have gained popularity with the ‘Stop the Steal’ movement, which seeks alternative echo chambers. And despite regulators’ best efforts, this trend is unlikely to reverse anytime soon: cut one platform down, and two grow back in its place.

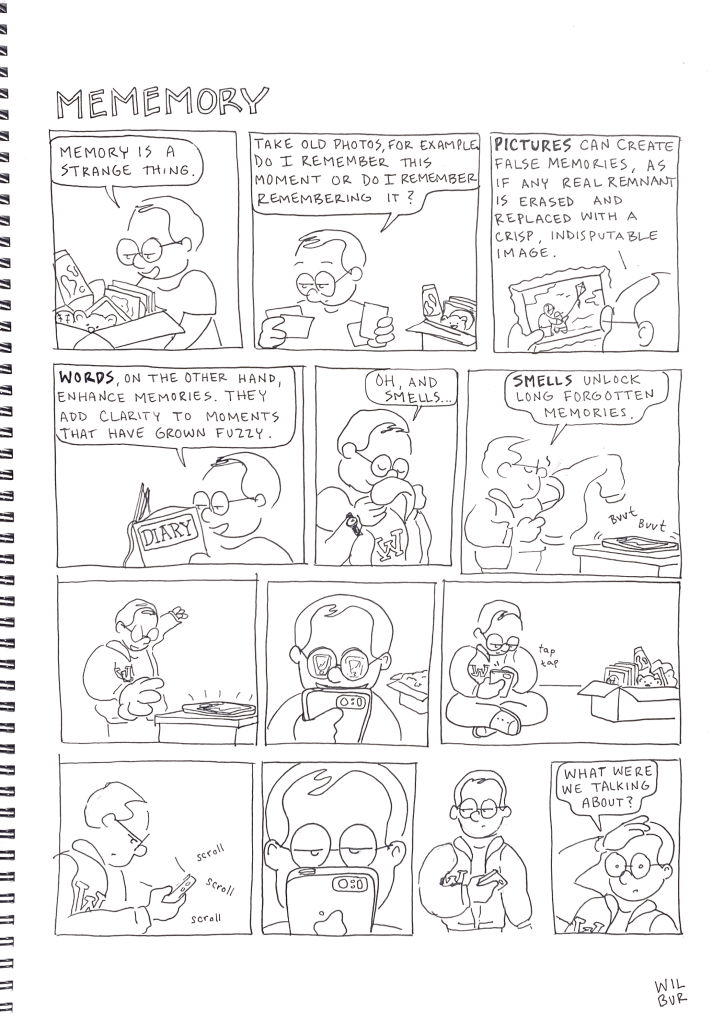

Meditations

Recently, personal matters have displaced the research I do for my essays. But I’ve still been pondering the world we live in and want to share those thoughts. Instead of a full essay this week, here are four short musings and questions to get us thinking:

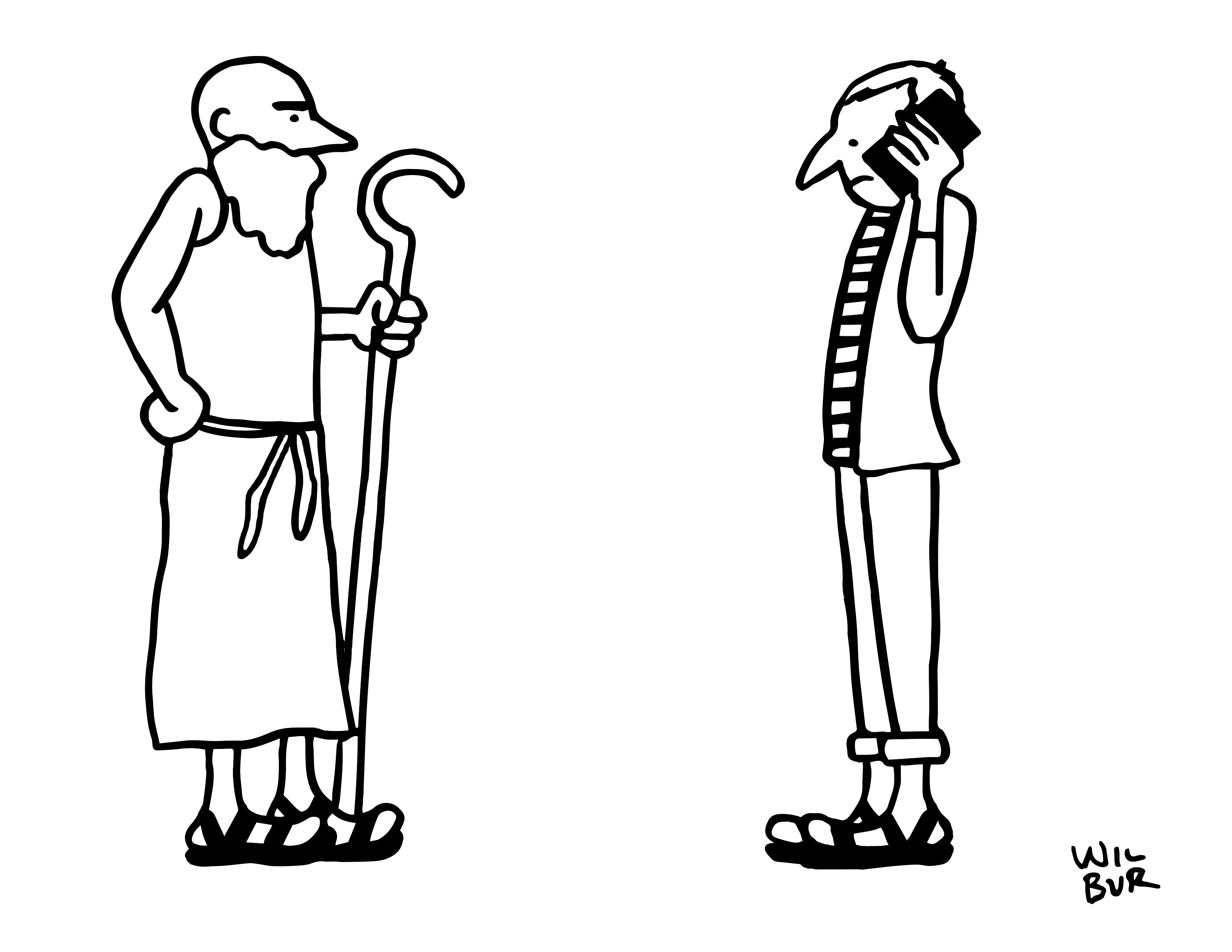

FASHION: Now that it’s summer, Teva sandals are everywhere. The terrible ’90s fashion statement is back in full force, but how are they still a thing? It’s like a hippie scourge on the community—an ancient fashion statement with modern velcro amenities. And don’t even get me started on wearing socks with sandals. It all begs the question: What’s the point of keeping up with fashion trends if nothing really changes for thousands of years?

BUSINESS: “…but how do they monetize it?” This was the only piece of a conversation I heard between two gentlemen in suits walking downtown. And what a question—a question that sums up so much of our current age. First, there’s how the question is asked. It’s at once a valid question and concocted jargon. Using the word “monetize” feels like contemporary boasting, like spitting out questions on ROI, A/B testing, or running lean—valid yet trite buzzwords to show that we “get” it, that we’re business savvy, that we ask the right questions in the right ways. In a strange way, it has become trendy to use pretentious business-speak. Second, there’s the economic dilemma of the question. In a world of “free” online content—a digital sharing glut—there seems to be this underlying (maybe unconscious) feeling that if we get enough users or followers, tap into the network effect like Zuckerberg did, then we make it rich. Simple. Except there’s still that nagging detail of how to grow a money tree from a foundation of nonpaying consumers. Google, a company notorious for asking tough interview questions, is cited as asking interviewees, “If ads were removed from YouTube, how would you monetize it?” And that’s really hitting the nail on the head, isn’t it? The business challenge of our era is that we, as customers, expect things to be free, but we, as businesspeople, do not know how “free” can sustainably make money (sorry, “be monetized”).

LANGUAGE: Horny is the word for feeling sexual arousal, but it’s hormones that cause the feeling. Wouldn’t it make more sense to say “hormy”?

HISTORY: Let’s assume Elon Musk’s vision of technologically advanced humans plays out the way he wants. We all become enhanced cyborgs living in a world of robots and superintelligent A.I. Who then will be the legends of today, the heroes that go down in the history books? If “history is written by the victors,” as Winston Churchill allegedly said, then will the superstars of our current era be remembered not as the Steve Jobses and the Elon Musks, but as the digital tools they created? It is not the climbing gear that gets the glory of being the first to climb Mt. Everest; it is the climber (but only because we are the victors, the tellers of the story). In Elon’s future, are modern humans merely the tools that machines will use to accomplish their historic feats? Will it be the implanted Neuralink brain chips of influential people that are remembered as the important historic figures rather than the people themselves?

Convenience, Community, or Both?

Every week, I get my groceries delivered. When you are perched on the twenty-eighth floor of a high-rise in downtown Chicago, grocery delivery is a practical choice. But it’s also a laughable luxury. Is it really too hard to patronize one of the four grocers in a four-block radius surrounding our building? Is it too much to ask to walk the aisles of food rather than scroll through online images? Is it such a burden to walk down the street with “heavy” groceries using our able bodies?

We are so far removed from the sources of our food that without the slightest hesitation we can click a few icons on a screen at home and the next day a man (yes, it’s always been a man) knocks on our door with bags of fruits, vegetables, and meat. There is no dirt under our fingernails, no long-range weather forecast in the Old Farmer’s Almanac, no acknowledgement of nutrient cycles from livestock waste to crop fertilizer. But lamenting the down and dirty of the family farm is hardly relevant. Long gone are the days of tilling the fields and harvesting the season.

Today there is not even the friendly interaction with the cashier as we check out at the grocer; there is no shared space in the aisles of what feels like an outdated warehouse experience; there is no harmless banter at the delicatessen counter; nor is there the tactile and fragrant sensation of choosing our own produce. We are, as the quote below states, traveling in boxes (our homes, our cars, our offices, etc.) and “going from one box to another” without the faintest connection to the fundamentals of life.

—

This week I came across a book from 1935 titled Five Acres and Independence: A Practical Guide to the Selection and Management of the Small Farm. What strikes the modern reader is not the level of detail or the pragmatic nature of the text, but how foreign its contents are. Topics like water, soil, crops, and sewage (the essential fabric of sustaining our everyday lives) are described in terms that, although meant to be familiar, are lost on contemporary urbanites. Loam, humus, baffle boards, pistillate, strawberry runners: my lack of recognition is almost embarrassing. Yet why would we recognize these? Many of us live in a world of concrete and steel, where “agriculture” is performed in far-away places stigmatized as “backward.” The word “landscape” is usually followed by “architecture” or “photography,” rather than reports of water drainage and nitrate levels. We are estranged from the physical world that humans are adapted to inhabit.

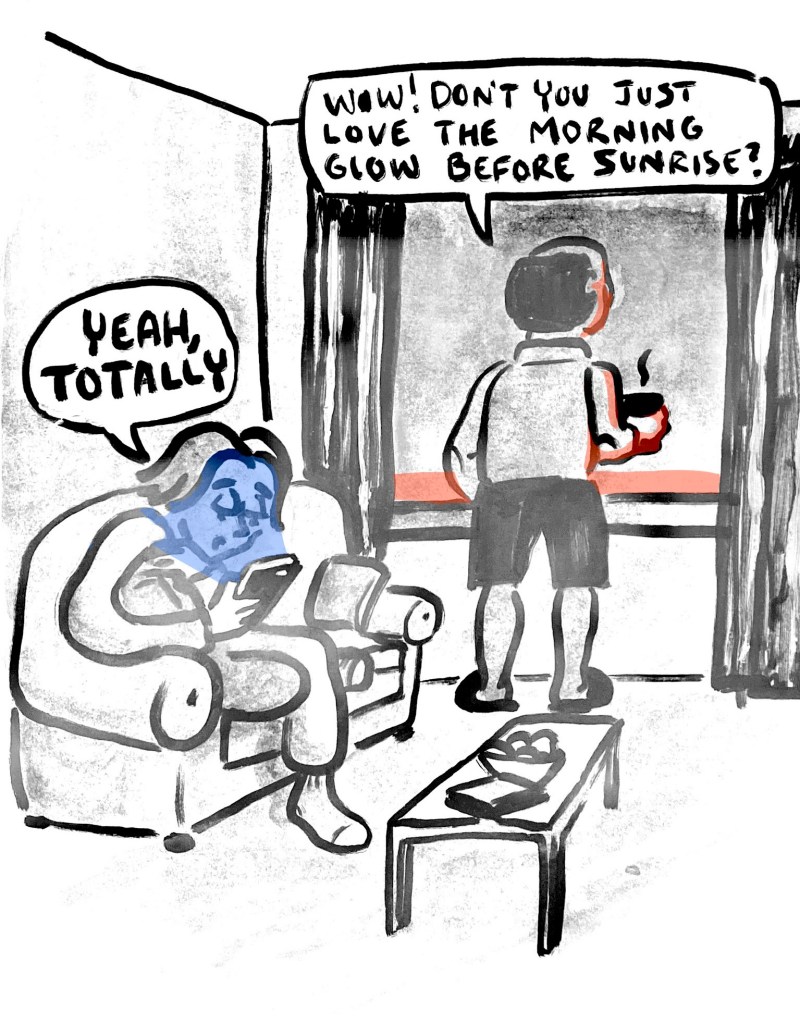

Our modern experience has found itself balancing convenience with community. Often (though not always), these two C’s are pitted against one another, with progress on one side and connection on the other. Yet they are not mutually exclusive; they are intertwined. Like all things, there are trade-offs to our choices, and as history teaches, societal trends ebb and flow from extremes. Perhaps our path to convenience is reaching a pivot point back to community. Or perhaps there is a third way, one that harmoniously blends human interaction with digital conveniences. Regardless, here is one take that summarizes this idea more clearly than I have:

Technology, even really the most rudimentary technologies, are a double-edged sword. And so the sense of community that we had by going to the grocer more often, by being outside on our front porch because we didn’t have A/C inside the house—that sense of connectedness with our community and our neighborhoods just doesn’t exist anymore. Everybody’s inside their boxes, and they’re going from one box to another.

What I believe the cultural shift that’s happening is a reach back to that sense of community. I think the younger generations are realizing that owning more stuff didn’t lead to more happiness; their parents aren’t happier, because they had more stuff or more space. But being connected to people [is different], especially now that you have the tease of having social media making you feel connected but not really.

—Stonly Baptiste, Co-founder & Partner at Urban Us venture fund, TechCrunch Disrupt Conference (2017)

Cavemen and Computers: A Success Story

The reasonable man adapts himself to the world: the unreasonable one persists in trying to adapt the world to himself. Therefore all progress depends on the unreasonable man.

—George Bernard Shaw, Man and Superman (1903)

Indeed, if the same mistake is repeated over and over again, what is the point of being persistent?

—Fang Wu & Bernardo Huberman, “Persistence and Success in the Attention Economy” (2009)

If nothing else, humans are two things: (1.) We are tool builders, constantly adapting to new environments by creating new dwellings, clothing, modes of transportation, and societies. And (2.) we are runners (yes, runners). It is our defining ability to run that is perhaps the most fundamental aspect of success. Rather than learning from contemporary masters or fighting through trial and error, perhaps the lessons of success can be best learned from the rise of the most successful species on earth—ourselves.

Excluding the use of man-made vehicles, Homo sapiens are still the fastest animals on earth (over long distances… on land… if it’s hot enough outside). Yes, we are natural-born runners, and this extremely specialized skill is the reason we stand on two legs, are relatively hairless, perspire rather than pant, and why our butts look so darn good. But before our brains grew large and humans reigned supreme, our early hominid ancestors used their unique physiology to their advantage over their knuckle-walking cousins.

Persistence hunting—chasing prey until sheer exhaustion—is thought to be the primary reason for our running abilities. Our prehistoric relatives (and even some indigenous peoples of modern day) weren’t faster or stronger than other creatures, but they would chase much quicker animals, such as wildebeest, zebra, and deer, for one or two days until the animals simply collapsed from exhaustion. It is even proposed that the rich protein diet afforded by persistence hunting is what allowed for developing larger brains in humans. Therefore, the first lesson in our story is that persistence is the key to success—a lesson as true in the digital age as it was back then.

Microsoft, arguably the most successful company of the 1990s, was such a juggernaut that at the turn of the century a federal judge ordered the company broken up as a monopoly (a ruling later overturned on appeal). What made Microsoft so successful? In a word, persistence. Steve Jobs, in a rare 1995 interview, emphasized Microsoft’s persistence, saying:

Microsoft took a big gamble to write for the Mac. And they came out with applications that were terrible. But they kept at it, and they made them better, and eventually they dominated the Macintosh application market, […] they’re like the Japanese; they just keep on coming.

Even Microsoft co-founder Bill Gates acknowledges persistence as the key to his personal and professional success. According to Gates, the best compliment he ever received was when a peer said to a group, “Bill is wrong, but Bill works harder than the rest of us. So even though it’s the wrong solution, he’s likely to succeed.” Just by keeping at it, Gates achieved an elite level of entrepreneurial accomplishment. But while persistence may be the key to success, it is not a panacea. Persistence can be misguided.

—

Being the best long-distance runners didn’t stop us from inventing the bicycle or the locomotive or the space shuttle. We separated ourselves from other hominids through our ability to adapt, to build the tools we needed to thrive. At a certain point, our ancestors spread across the globe, adapting to changing environments. Early humans built clothes and dwellings to survive the polar ice; they developed agriculture to create stability where there was scarcity; and they developed civilizations and law and order to manage increasing tribal size. Therefore, the second lesson of our story is that we must adapt to an ever-changing environment in order to succeed.

The two lessons of human success may seem contradictory (persist, but always be changing); however, it’s a matter of balance. Persistence and adaptability are equally important, but persistence is broad; it’s goal-oriented. Adaptability is detailed; it relates to our behavior, the details of how we attain our goals. Finding a balance between the two is extremely difficult in practice. We often get caught pursuing the wrong goals or misunderstanding the right ones.

Warren Buffett once wrote to his shareholders, “When an industry’s underlying economics are crumbling, talented management may slow the rate of decline. Eventually, though, eroding fundamentals will overwhelm managerial brilliance.” He was talking about the newspaper industry back in 2006, and his comments draw an important distinction between productive persistence and blind stubbornness, a distinction that goes beyond newspapers.

Kodak was in the photography business, yet it lost sight of its true goal, mistaking itself for a film business, and failure followed. Modern healthcare is criticized for having the same problem: promoting health care where it should promote health. It is easy to write that we should persist in our goals, but much harder to clearly define them. Still, we are all descendants of the most successful creatures in the history of earth. Therefore, perhaps we are all bred to be successful (one persistent, yet adaptable, step at a time).

—

Asch, David A., and Kevin G. Volpp. “What Business Are We In? The Emergence of Health as the Business of Health Care.” New England Journal of Medicine 367.10 (2012): 888-889.

Carrier, David R., et al. “The Energetic Paradox of Human Running and Hominid Evolution.” Current Anthropology 25.4 (1984): 483-495.

Wilson, Edward O. The Social Conquest of Earth. WW Norton & Company, 2012.

Beliefs (or lack thereof)

What is religion? It’s community, history, cultural identity; it’s a way to make sense of the world. Some of us use religion as our primary source of answers; others use mysticism; others: science; and still others use a combination of methods. This is not an argument for one way over the others, but rather a look at beliefs through several different lenses:

We all try to make sense of the world. Our methods may differ, but we are all seeking to understand the same universe:

—Ross Douthat, author of Bad Religion, speaking on Real Time with Bill Maher (2012)

Religion plays a comforting role for some people:

—Zuckerman et al., “The Relation Between Intelligence and Religiosity” (2013)

But the comfort of belief is not confined to religious doctrine:

—Ross Douthat, author of Bad Religion, speaking on Real Time with Bill Maher (2012)

It is becoming more difficult to understand a world defined by modern technology:

—Steve Jurvetson, billionaire tech investor, “Accelerating Rich-Poor Gap,” Solve for X (2013)

But humans are resilient creatures: Make the world incomprehensible, and they will find a way to comprehend it:

—Mark Manson, author, “The Rise of Fundamentalist Belief” (2013)

While we are getting better at adapting to the ever-changing world…:

—Thomas Friedman quoting Astro Teller, CEO of Google’s X Research & Development Laboratory

…the world is changing at an ever-increasing rate:

—Ray Kurzweil, computer scientist, “The Law of Accelerating Returns” (2001)

Technology may soon make everyone feel as if they’ve lost control:

And while some people think the world is still divided into those who “understand” and those who don’t…:

—Steve Jurvetson, billionaire tech investor, “Accelerating Rich-Poor Gap,” Solve for X (2013)

…technological advancement will eventually humble even our brightest minds:

—Astro Teller, CEO of Google X, quoted in Thank You for Being Late (2016)

For, at the end of the day, we are all humans (no matter how intelligent). And that is the thesis of this entire “Too Smart for Your Own Good” series:

You are never too smart to be humble.

Intelligence does not make a person immune to faulty logic, insensitivity, poor timing, or technological change. There are biological limitations to being human. However we choose to make sense of the world—whatever strategies for life we employ—we are making a personal choice. So remember: “Judge not, lest ye be judged.”

Additional Reading:

Urban, Tim. “Religion for the Nonreligious.” waitbutwhy.com (2014).

Sharov, Alexei A., and Richard Gordon. “Life before earth.” arXiv preprint arXiv:1304.3381 (2013).

Zuckerman, Miron, Jordan Silberman, and Judith A. Hall. “The Relation Between Intelligence and Religiosity: A meta-analysis and some proposed explanations.” Personality and Social Psychology Review 17.4 (2013): 325-354.

—

This is the fifth installment of a series titled “Too Smart for Your Own Good.”

The Benefits of Doubt

This is a story of two brainiacs: both pioneers in their chosen fields, both knowingly intelligent, and both recipients of the Nobel Prize. Despite these similarities, their stories diverge, William Shockley’s toward eventual demise and Daniel Kahneman’s toward ultimate redemption, and the difference lies in each genius’s relationship with self-doubt. While doubt is often cast as the villain of our lives, this is a different tale, one where doubt is the protagonist, the saving grace. This is a story of two men whose success and failure hinged on the benefits of doubt.

—

William Shockley grew up in California during the 1920s, receiving a B.S. from the California Institute of Technology in 1932. Quantum physics was still a fresh discovery, and Shockley reportedly “absorbed most of it with astonishing ease.” In 1936, he earned his Ph.D. from M.I.T. and began his innovative career at Bell Laboratories, one of the most prolific R&D enterprises in the world. As a research director at Bell, Shockley helped invent the transistor (as in “transistor radio”), for which he received the Nobel Prize in Physics in 1956, an achievement that would lead to his eventual undoing.

That same year, Shockley moved back to Mountain View, California, to start Shockley Semiconductor Laboratory, the first technology company in what would become Silicon Valley. As a researcher and a manager, William Shockley was brilliant and innovative but domineering. His arrogance at Shockley Labs was a point of contention among the employees and a problem that would only be exacerbated after the Nobel Prize. Winning the prize seemed to eradicate any residual self-doubt left from his youth. Shockley became deaf to outside opinion, blind to reason, and unforgivingly egotistical. In 1957, less than a year after Shockley became a Nobel laureate, eight of his best and brightest left the company to form Fairchild Semiconductor.

The Shockley schism was in large part due to William Shockley’s unwillingness to continue research into silicon-based semiconductors despite his employees’ belief that silicon would be the future. His hubris would be his end, as Shockley Labs never recovered from missing the silicon boom. The cohort who left would later create numerous technology firms in Silicon Valley (including the tech giant Intel), giving birth to what has become one of the most innovative regions in the world.

William Shockley himself faded into obscurity, estranged from his children, his reputation tarnished after years of publicly touting eugenics. Along with Henry Ford, Pablo Picasso, Albert Einstein, and Mahatma Gandhi, Time Magazine would name William Shockley as one of the “100 Most Important People of the 20th Century.” Yet he died bitter and disgraced in 1989, still headstrong, self-doubt still absent from his life. For Shockley, arrogance and intellect seemed to be inextricably linked; however, such a fate is not inevitable.

—

Daniel Kahneman’s story is different. It’s one where self-aware intellect meets a healthy dose of self-doubt. Born in 1934, Kahneman grew up a Jew in France during the Nazi occupation. His family was displaced several times, and his experiences with Nazis would show him firsthand the complexities and peculiarities of the human mind, a foreshadowing of his illustrious career in psychology.

As an adolescent after World War II, Kahneman moved to Palestine, where in eighth grade he finally found like-minded friends. Acknowledging both his intellect and its dangers, he writes, “It was good for me not to be exceptional anymore.” While Kahneman knew he was smart, he always saw his deficits (and sometimes to a fault). As Michael Lewis, author of Moneyball and The Undoing Project, puts it, “Everything [Daniel Kahneman] thinks is interesting. He just doesn’t believe it.” And this self-doubt would lead directly to his success.

After earning degrees from Hebrew University and U.C. Berkeley, Kahneman saw his work in psychology take off with his first collaboration with Amos Tversky. Between 1971 and 1981, Tversky and Kahneman published five journal articles that would each be cited over 1,000 times. Their work on cognitive biases, largely fueled by the ability to doubt their own minds, has been instrumental in upending the long-standing belief that humans are purely rational creatures.

In 2002, Kahneman was awarded the Nobel Prize in Economics for showing, through his work on prospect theory, cognitive biases, and heuristics, that even the brightest among us make mental mistakes every day. Like William Shockley, winning the Nobel Prize would be a turning point in Kahneman’s life; unlike Shockley, the prize made Kahneman better, not worse. Michael Lewis again comments, “[T]he person who we know post-Nobel Prize is entirely different from the person who got the Nobel Prize […] he is much less gloomy, much less consumed with doubts.” In a stroke of irony, it would take what is arguably the most prestigious award in the world to finally provide Kahneman with the validation that his thoughts on doubt are worthwhile.

Kahneman’s career has been largely focused on the benefits of doubt: the idea that humans may be mistaken in our confidence and intuition, and need to question our assuredness. While his legacy is still to be seen, Kahneman’s work may forever change how humans think about thinking. His impact may even put him on Time’s list of “100 Most Important People of the 21st Century.”

—

The stories of Shockley and Kahneman serve as both a warning and a call to action. Doubt should not be seen as a curse, but rather a necessity—a blessing, even. It is when we are not in doubt that we should take notice. Arrogance at any level can blind us. We don’t have to be a savant to be egotistical or have a “big head” (yet the smartest among us are often guilty of this). Only through our doubts can we expect to learn from others, question our assumptions, and, ultimately, be successful long-term. As soon as we stop doubting, stop questioning, we stop growing.

—

This is the fourth installment of a series titled “Too Smart for Your Own Good.”